Conductor

A new modality for shaping sounds using hand gestures

Challenge

Modern synthesizers have a myriad of parameters, and finding the right sound can be difficult. To address this problem, my friend Nicolas Huynh Thien and I asked ourselves: how might we use real-time hand tracking to make synthesizer configuration more intuitive?

Outcome

The outcome of our exploration is a software tool we call Conductor. Conductor is a new modality that allows musicians to control MIDI-enabled software and instruments using hand gestures.

The Process

Understand

We observed that, with only two hands, it can be difficult to play and explore sounds at the same time. We also noted that the existing solutions we looked at required costly hardware (such as the Leap Motion controller). So we decided to focus on a software-based solution that runs directly in the web browser.

Design

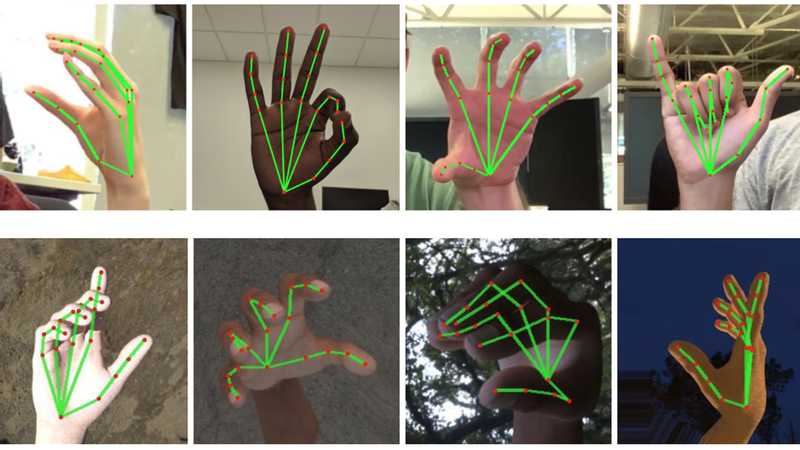

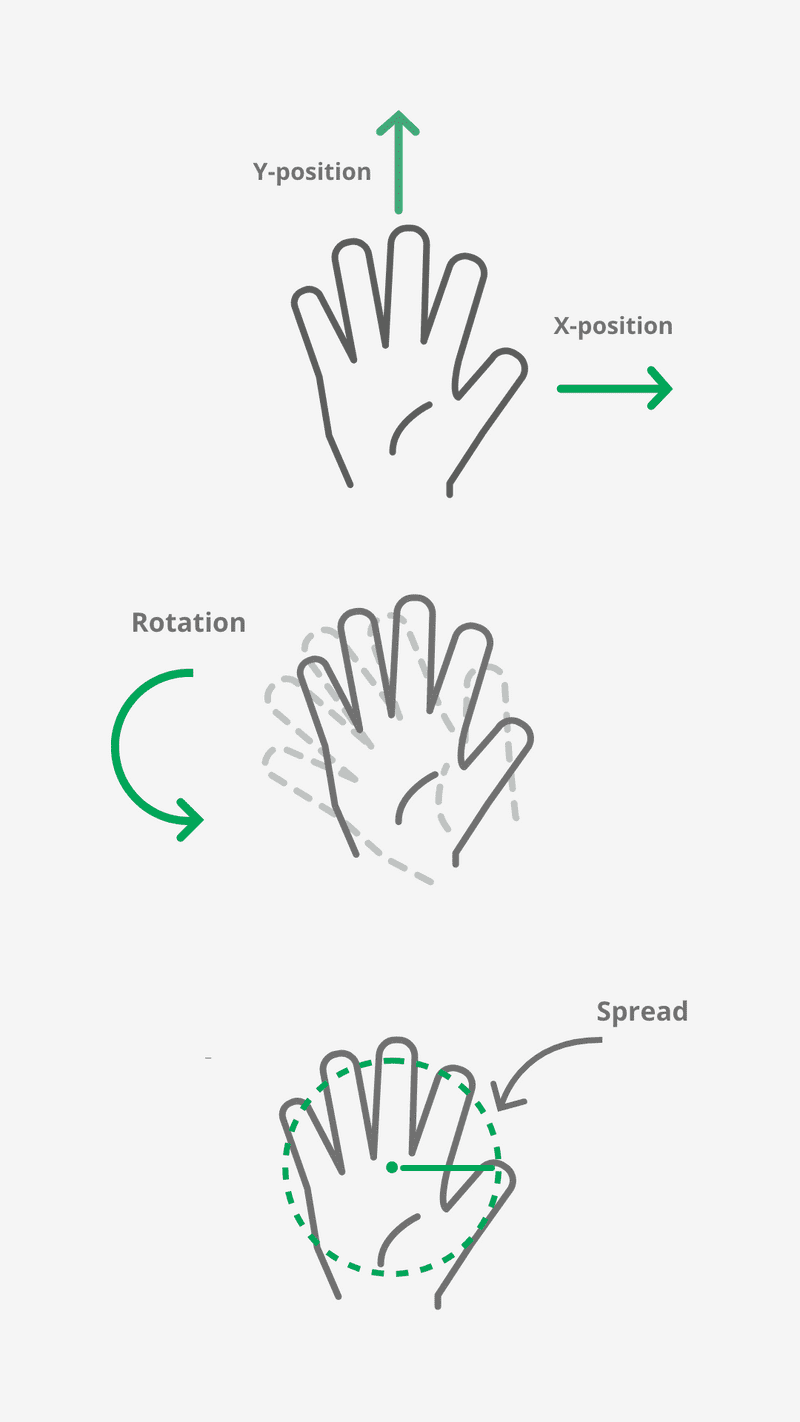

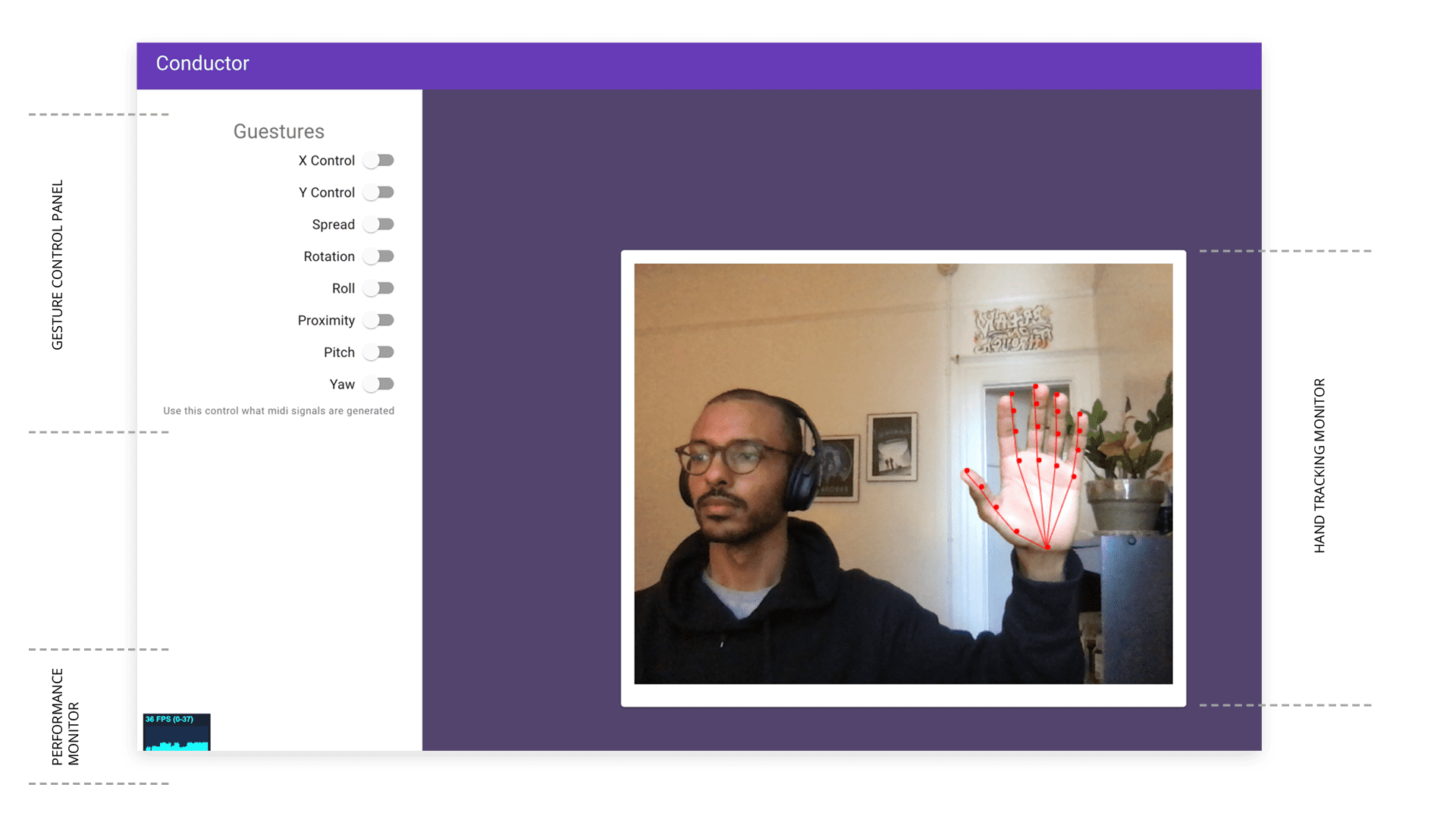

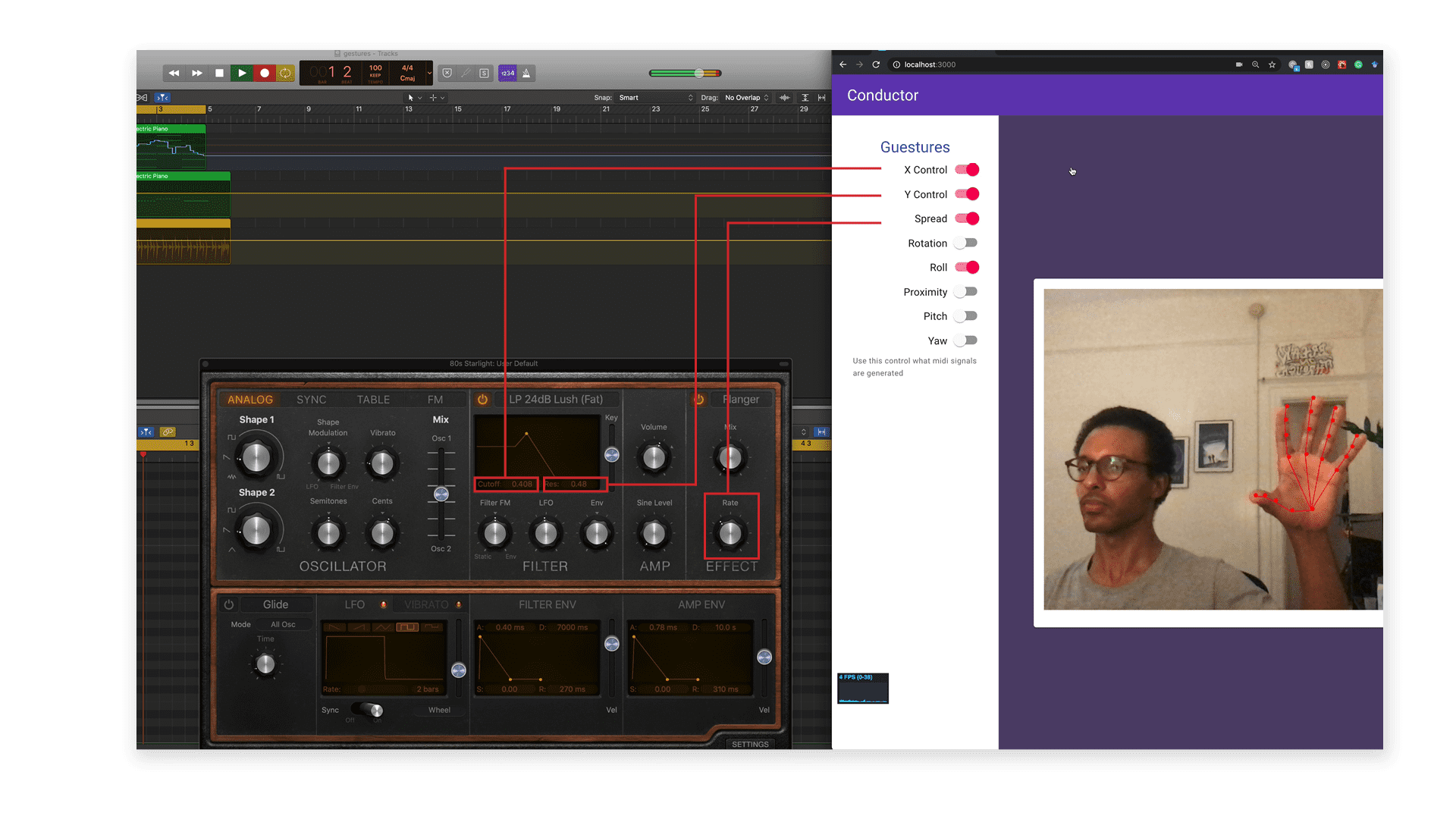

Using real-time hand tracking, we were able to read several key landmarks of the user's hand. From these landmarks we derived hand orientation values such as rotation, spread, pitch, yaw, and roll. Finally, we mapped these derived orientation values to MIDI control change (CC) messages, effectively turning the user's hand into a multi-dimensional mouse.
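The landmark-to-orientation step can be sketched as follows. This is a minimal sketch, not Conductor's actual code: it assumes a tracker that returns 21 normalized landmarks per hand in the MediaPipe Hands layout (wrist at index 0, thumb tip at 4, pinky tip at 20), and the names `deriveFeatures` and `HandFeatures`, as well as the exact formulas for roll and spread, are hypothetical. Pitch and yaw would additionally need the depth (`z`) values and are left out here.

```typescript
// Assumed landmark shape: normalized image coordinates in [0, 1].
interface Landmark {
  x: number;
  y: number;
  z: number;
}

// Indices in the MediaPipe Hands 21-point layout (assumed tracker).
const WRIST = 0;
const THUMB_TIP = 4;
const INDEX_MCP = 5;
const MIDDLE_MCP = 9;
const PINKY_MCP = 17;
const PINKY_TIP = 20;

// Hypothetical set of derived values.
interface HandFeatures {
  x: number;      // palm centre, horizontal (0 = left edge of frame)
  y: number;      // palm centre, vertical (0 = top edge of frame)
  roll: number;   // angle of the index-to-pinky knuckle line, in radians
  spread: number; // thumb-tip to pinky-tip distance divided by palm length
}

// 2D Euclidean distance between two landmarks (depth ignored).
function distance(a: Landmark, b: Landmark): number {
  return Math.hypot(a.x - b.x, a.y - b.y);
}

function deriveFeatures(hand: Landmark[]): HandFeatures {
  if (hand.length < 21) throw new Error("expected 21 landmarks");

  // Palm centre: average of the wrist and three knuckles.
  const palm = [WRIST, INDEX_MCP, MIDDLE_MCP, PINKY_MCP].map((i) => hand[i]);
  const x = palm.reduce((sum, p) => sum + p.x, 0) / palm.length;
  const y = palm.reduce((sum, p) => sum + p.y, 0) / palm.length;

  // Roll: tilt of the line across the knuckles relative to horizontal.
  const roll = Math.atan2(
    hand[PINKY_MCP].y - hand[INDEX_MCP].y,
    hand[PINKY_MCP].x - hand[INDEX_MCP].x,
  );

  // Spread: finger span normalized by palm length (wrist to middle knuckle).
  const palmLength = distance(hand[WRIST], hand[MIDDLE_MCP]);
  const spread = palmLength > 0 ? distance(hand[THUMB_TIP], hand[PINKY_TIP]) / palmLength : 0;

  return { x, y, roll, spread };
}
```

Dividing the finger span by palm length is one way to keep the spread value roughly independent of how far the hand is from the camera.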

Prototype

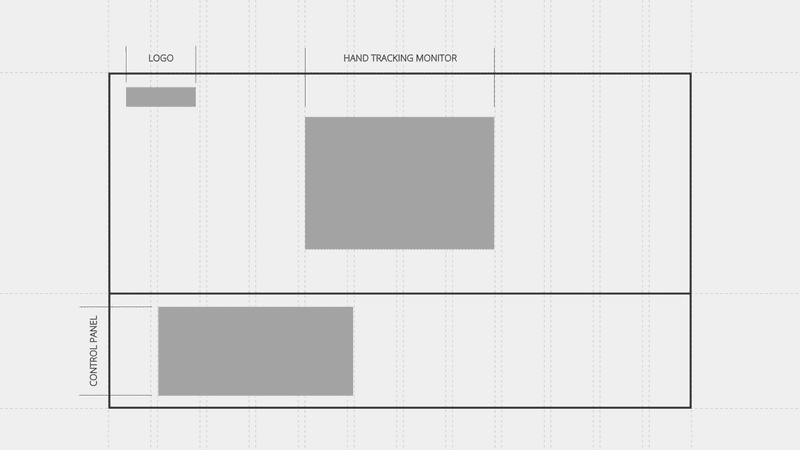

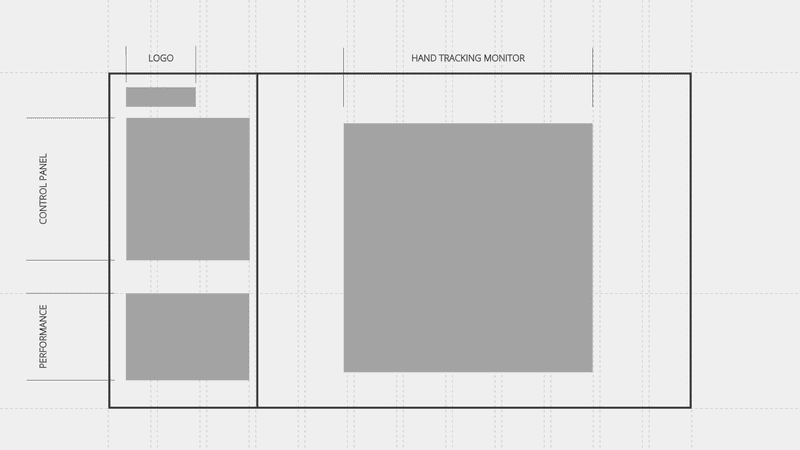

The app was built as a React.js application running in the browser. Before coding the app, I explored a few different layouts to ensure a good user experience. I divided the screen into two main areas: the Hand Tracking Monitor and the Control Panel. The Hand Tracking Monitor sits at the center of the page and gives visual feedback about the user's hand orientation. The Control Panel lets the user select which orientation values to use for sound shaping.

X position mapped to filter cutoff, Y position mapped to resonance, spread mapped to flanger rate.
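The mapping described above could be expressed as a small table of feature-to-controller assignments. The sketch below assumes CC 74 for filter cutoff and CC 71 for resonance (common conventions, but they vary by synth) and an arbitrary CC 20 for flanger rate; the `CcMapping` type, the input ranges, and the function names are hypothetical and not taken from Conductor.

```typescript
// Hypothetical assignment of one derived hand value to a MIDI controller.
interface CcMapping {
  feature: "x" | "y" | "spread";
  cc: number;       // controller number, 0..127
  min: number;      // feature value that maps to CC value 0
  max: number;      // feature value that maps to CC value 127
  invert?: boolean; // flip the direction of the mapping
}

// Clamp a feature into [min, max] and scale it to the 7-bit CC range 0..127.
function toCcValue(value: number, min: number, max: number, invert = false): number {
  const t = Math.min(1, Math.max(0, (value - min) / (max - min)));
  return Math.round((invert ? 1 - t : t) * 127);
}

// 3-byte Control Change message: status (0xB0 | channel), controller, value.
function controlChange(channel: number, cc: number, value: number): number[] {
  return [0xb0 | (channel & 0x0f), cc & 0x7f, value & 0x7f];
}

const mappings: CcMapping[] = [
  { feature: "x", cc: 74, min: 0, max: 1 },               // filter cutoff (assumed CC 74)
  // Image y grows downward, so invert: raising the hand raises resonance.
  { feature: "y", cc: 71, min: 0, max: 1, invert: true }, // resonance (assumed CC 71)
  { feature: "spread", cc: 20, min: 0.5, max: 2 },        // flanger rate (arbitrary CC 20)
];

// Turn one frame of features into one CC message per mapping.
function messagesFor(features: Record<"x" | "y" | "spread", number>, channel = 0): number[][] {
  return mappings.map((m) =>
    controlChange(channel, m.cc, toCcValue(features[m.feature], m.min, m.max, m.invert)),
  );
}
```

In the browser, each three-byte array could be passed to the `send()` method of a `MIDIOutput` obtained through the Web MIDI API's `navigator.requestMIDIAccess()`. Clamping before rounding keeps out-of-range hand positions from producing invalid data bytes.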